Oracle has announced it will be one of the first hyperscale cloud providers to offer artificial intelligence (AI) supercomputing powered by AMD's Instinct MI355X GPUs on Oracle Cloud Infrastructure (OCI).

The forthcoming zettascale AI cluster is designed to scale up to 131,072 MI355X GPUs and is architected to support high-performance, production-grade AI training, inference, and new agentic workloads. It is expected to offer more than double the price-performance of the previous generation of hardware.

Expanded AI capabilities

The announcement highlights several key hardware and performance enhancements. The MI355X-powered cluster provides up to 2.8 times higher throughput for AI workloads. Each GPU features 288 GB of high-bandwidth memory (HBM3) and eight terabytes per second (TB/s) of memory bandwidth, allowing larger models to run entirely in memory and boosting both inference and training speeds.
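To put the memory figures in perspective, the short Python sketch below works out how long one GPU would take to read its entire 288 GB of memory once at the quoted 8 TB/s. It is a back-of-the-envelope calculation only: it assumes decimal units (1 TB = 1,000 GB) and sustained peak bandwidth, which real workloads rarely reach, and the function names are illustrative rather than part of any Oracle or AMD tooling.

```python
# Per-GPU figures quoted in the article.
HBM_CAPACITY_GB = 288      # high-bandwidth memory per GPU
HBM_BANDWIDTH_TBPS = 8     # memory bandwidth per GPU, in TB/s

def full_sweep_ms(resident_gb, bandwidth_tbps=HBM_BANDWIDTH_TBPS):
    """Milliseconds needed to read `resident_gb` once at peak bandwidth.

    With decimal units, GB divided by TB/s gives milliseconds directly.
    """
    return resident_gb / bandwidth_tbps

def max_sweeps_per_second(resident_gb, bandwidth_tbps=HBM_BANDWIDTH_TBPS):
    """Upper bound on how many full reads of `resident_gb` fit in one second."""
    return bandwidth_tbps * 1000 / resident_gb

if __name__ == "__main__":
    print(f"One full read of {HBM_CAPACITY_GB} GB: "
          f"{full_sweep_ms(HBM_CAPACITY_GB):.1f} ms")
    print(f"Full reads per second (upper bound): "
          f"{max_sweeps_per_second(HBM_CAPACITY_GB):.1f}")
```

For a workload that must touch every byte of a memory-resident model on each step, this gives a rough ceiling on steps per second; measured throughput would be lower.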

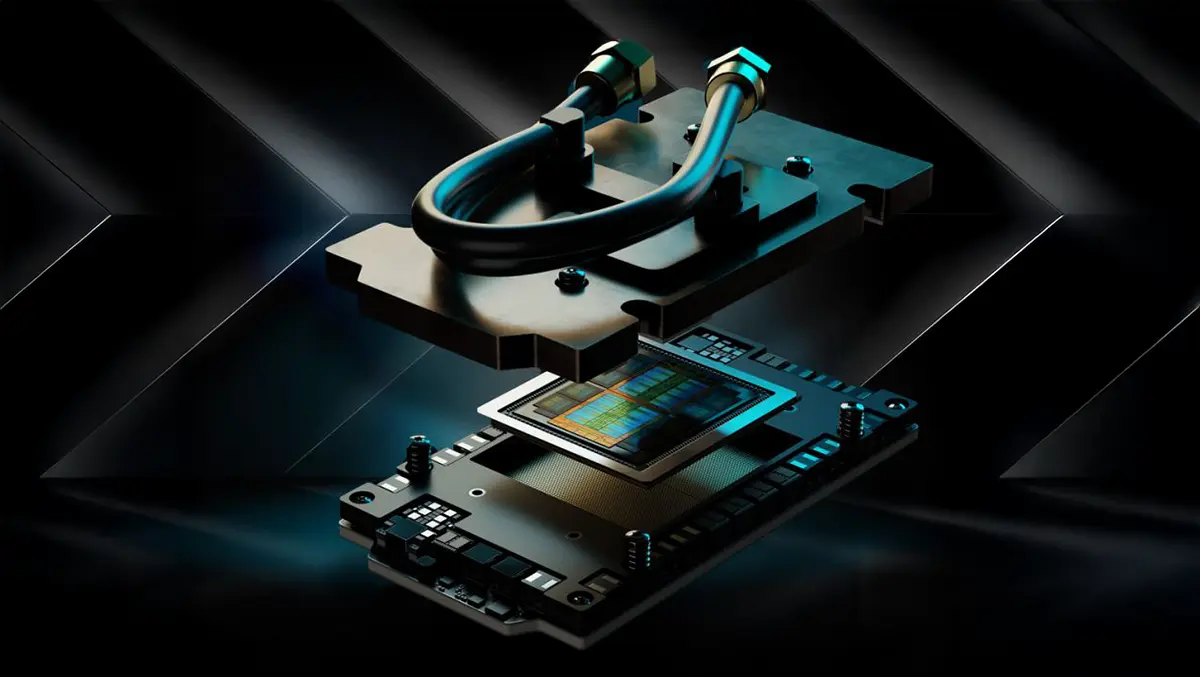

The GPUs also support FP4, a four-bit floating-point format that enables more efficient, higher-speed inference for large language and generative AI models. The cluster's infrastructure uses dense, liquid-cooled racks, each housing 64 GPUs and drawing up to 125 kilowatts, to maximise performance density for demanding AI workloads. The cluster also marks the first deployment of AMD's Pollara AI NICs, which enhance RDMA networking with high-performance, low-latency connectivity.

Mahesh Thiagarajan, Executive Vice President, Oracle Cloud Infrastructure, said:

"To support customers that are running the most demanding AI workloads in the cloud, we are dedicated to providing the broadest AI infrastructure offerings. AMD Instinct GPUs, paired with OCI's performance, advanced networking, flexibility, security, and scale, will help our customers meet their inference and training needs for AI workloads and new agentic applications."

The zettascale OCI Supercluster with AMD Instinct MI355X GPUs delivers a high-throughput, ultra-low latency RDMA cluster network architecture for up to 131,072 MI355X GPUs. AMD claims the MI355X provides almost three times the compute power and a 50 percent increase in high-bandwidth memory over its predecessor.

Performance and flexibility

Forrest Norrod, Executive Vice President and General Manager, Data Center Solutions Business Group, AMD, commented on the partnership, stating:

"AMD and Oracle have a shared history of providing customers with open solutions to accommodate high performance, efficiency, and greater system design flexibility. The latest generation of AMD Instinct GPUs and Pollara NICs on OCI will help support new use cases in inference, fine-tuning, and training, offering more choice to customers as AI adoption grows."

The Oracle platform aims to support customers running the largest language models and diverse AI workloads. OCI users running MI355X-powered shapes can expect up to 2.8 times greater throughput, resulting in faster results, lower latency, and the capability to run larger models.

AMD's Instinct MI355X provides customers with substantial memory and bandwidth enhancements, which are designed to enable both fast training and efficient inference for demanding AI applications. The new support for the FP4 format allows for cost-effective deployment of modern AI models, enhancing speed and reducing hardware requirements.
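The link between FP4 and reduced hardware requirements comes down to bytes per weight. The Python sketch below estimates the memory needed for model weights alone at different precisions and how many 288 GB GPUs that implies. The 405-billion-parameter model is a hypothetical example, and the estimate deliberately ignores activations, key-value caches, and any per-block scaling metadata a real four-bit format may carry, so treat it as a lower bound rather than a sizing guide.

```python
import math

# Bits used to store one weight in each format.
BITS_PER_WEIGHT = {"fp16": 16, "fp8": 8, "fp4": 4}

HBM_CAPACITY_GB = 288  # per-GPU memory quoted in the article

def weights_gb(num_params, fmt):
    """Gigabytes (decimal) needed to hold the weights alone."""
    return num_params * BITS_PER_WEIGHT[fmt] / 8 / 1e9

def gpus_needed_for_weights(num_params, fmt, capacity_gb=HBM_CAPACITY_GB):
    """Minimum GPU count whose combined memory can hold the weights."""
    return math.ceil(weights_gb(num_params, fmt) / capacity_gb)

if __name__ == "__main__":
    params = 405e9  # hypothetical model size, chosen for illustration only
    for fmt in ("fp16", "fp8", "fp4"):
        print(f"{fmt}: {weights_gb(params, fmt):.1f} GB of weights, "
              f"at least {gpus_needed_for_weights(params, fmt)} GPU(s)")
```

Under these assumptions the hypothetical model's weights need three GPUs at 16-bit precision but fit on a single GPU at FP4.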

The dense, liquid-cooled infrastructure supports 64 GPUs per rack, with each GPU operating at up to 1,400 watts, and is engineered to optimise training times and throughput while reducing latency. A powerful head node, equipped with an AMD Turin high-frequency CPU and up to 3 TB of system memory, is included to help users maximise GPU performance through efficient job orchestration and data processing.
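The rack figures quoted in the article can be combined with some simple arithmetic. The Python sketch below derives how many racks a full 131,072-GPU cluster would occupy and how much of the 125-kilowatt rack budget the GPUs themselves could draw. It assumes every rack is fully populated with 64 GPUs and that the remaining power covers other rack components; neither assumption is stated in the announcement, and the function names are illustrative.

```python
# Figures quoted in the article.
GPUS_PER_RACK = 64
GPU_MAX_WATTS = 1_400
RACK_MAX_KW = 125
CLUSTER_MAX_GPUS = 131_072

def racks_for(gpus, per_rack=GPUS_PER_RACK):
    """Number of racks needed to house `gpus`, rounding up."""
    return -(-gpus // per_rack)

def gpu_draw_kw(per_rack=GPUS_PER_RACK, watts=GPU_MAX_WATTS):
    """Peak power drawn by the GPUs alone in one rack, in kilowatts."""
    return per_rack * watts / 1000

def non_gpu_headroom_kw(rack_kw=RACK_MAX_KW):
    """Rack power left over once the GPUs are at their maximum draw."""
    return rack_kw - gpu_draw_kw()

if __name__ == "__main__":
    print(f"Racks at full scale: {racks_for(CLUSTER_MAX_GPUS)}")
    print(f"GPU draw per rack: {gpu_draw_kw():.1f} kW")
    print(f"Remaining rack budget: {non_gpu_headroom_kw():.1f} kW")
```

On these numbers, a maximum-size cluster spans 2,048 racks, and the GPUs account for roughly 90 of each rack's 125 kilowatts.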

Open-source and network advances

AMD emphasises broad compatibility and customer flexibility through the inclusion of its open-source ROCm stack, which encompasses popular programming models, tools, compilers, libraries, and runtimes for AI and high-performance computing development on AMD hardware. This allows customers to use flexible architectures and reuse existing code without vendor lock-in.

Network infrastructure for the new supercluster will feature AMD's Pollara AI NICs, which provide advanced RDMA over Converged Ethernet (RoCE) features, programmable congestion control, and support for open standards from the Ultra Ethernet Consortium. Together, these are intended to facilitate low-latency, high-performance connectivity among large numbers of GPUs.

The new Oracle-AMD collaboration is expected to provide organisations with enhanced capacity to run complex AI models, speed up inference times, and scale up production-grade AI workloads economically and efficiently.